Psychology

We offer a well-resourced and stimulating intellectual environment for research, which includes competitive top-up scholarships, teaching fellowships and financial support to attend national and international conferences and other forms of professional development.

Our research students are vital contributors to our excellence in research. They enjoy a supportive and rigorous environment within both their area of specialisation and the wider school community.

Our researchers actively collaborate with other researchers across the University through multidisciplinary initiatives, including:

- the Brain and Mind Centre, where researchers work together on mental health, cognition and brain sciences

- the Charles Perkins Centre, which tackles the global issues of obesity, diabetes, cardiovascular disease and related conditions

- the Lambert Initiative for Cannabinoid Therapeutics, investigating the medicinal use of cannabinoids

- the Psycho-oncology Cooperative Research Group, a world-renowned psycho-oncology research group

- the Centre for Medical Psychology & Evidence-based Decision-making

Our research groups and labs

Clinical

Our clinical psychology research broadly examines the psychological, sociocultural, emotional, intellectual, neuropsychological and behavioural aspects of human functioning in an effort to promote understanding of various disorders, evidence-based treatments, healthy development and adjustment.

Training and research clinics provide assessment and intervention for a range of presentations for the university and broader community, as well as facilitating research. Specialist clinics within the School include the Psychology Clinic, the Child Behaviour Research Clinic, the Gambling Treatment and Research Clinic and the Healthy Brain Ageing Clinic.

- Assessment, maintaining factors and enhancing treatment of Social Anxiety Disorder

- Assessment, maintaining factors and enhancing treatment of Generalized Anxiety Disorder

- Meta-cognitive processes and anxiety disorders

- The maintenance and treatment of rumination in Social Anxiety Disorder

- Gambling Disorders

- Impulse control disorders

- Non-substance behavioural addictions

- Gambling Treatment and Research Clinic

- Placebo and nocebo effects

- Expectancy and treatment interactions

- Cue-induced craving for food and other drugs

- Developmental psychopathology

- Parenting and family processes

- Family therapy

- Childhood emotional and behavioural problems

- Learning theory

- Psychological genetics and epigenetics

- Child Behaviour Research Clinic

- Death anxiety among clinical populations

- Aetiological explanations for mental illness and their cascading effects

- Psychological challenges related to sexuality (e.g., LGBTQI, non-monogamy)

- Social cognition and individual differences lab

- Gambling disorder and addictive behaviours (e.g., online gaming)

- Digital and cashless payments

- Electronic gaming machines and Internet gambling

- Harm minimization and prevention of addictions

- Role of emerging technology in mental health disorders

- Consumer-led interventions

- Gambling treatment including online and face-to-face options

- Stigma reduction

- Customised, targeted communications to reduce harms

- Director, Gambling Treatment and Research Clinic

- Founder and Leader, Technology Addiction Team

- Understanding the commonalities and differences in cognitive and neural development between children with and without mental health issues

- Effects of stressful and impoverished home environments on child development, particularly on cognitive, neural, and educational outcomes

- How to improve transfer from what is learned and experienced in therapy to the "real world"

- Seedling Lab

- Visual perception and memory in temporal lobe disorders

- Scene perception and scene construction

- Short-term memory processes in early dementia

- Visual Cognition Lab

- Early childhood development and psychopathology

- Antisocial behaviour and conduct problems across the lifespan

- Parenting processes & the parent-child relationship (including attachment)

- Family-based interventions (including parent training)

- Emotional and moral development, empathy, callous-unemotional traits

- Attentional biases and attentional mechanisms

- Disorders of visual imagery and visual memory

- Palinopsia (persisting afterimages)

- Assessment, risk and protective factors, and interventions targeting school-based bullying

- Assessment, risk and protective factors, and interventions targeting adolescent anxiety and depression

- Cross-cultural aspects of mental health and wellbeing

- Overcoming barriers to mental health care access and use

- Memory processes in dementia

- Prospection in dementia

- Anhedonia and apathy in dementia

- Social communication in dementia

- Using virtual reality tools to assess and treat mood and cognitive disorders

- Assessing a smartphone app-delivered alcohol sobriety program

- Assessing the effect of junk food on self-control, and its role in obesity

- Doctor-patient-family communication and development/evaluation of decision-making resources

- Psychosexual adjustment and quality of life (cancer, HIV)

- Evaluating a psychological assessment template of women considering risk-reducing mastectomy

- Hoarding disorder

- Social cognition

- Face processing

- Emotion perception

- Ageing and dementia

- Neuroimaging

- Memory: emotion memory, episodic memory, semantic memory

- Neuropsychological rehabilitation

- Nature and mechanism of neuropsychological disorders arising from brain insults

- Memory deficits in neurological disorders

- Child neuropsychology

- Sleep and treatments of sleep disorders

- Social cognition

- Emotion regulation

- Emotional intelligence

- Coping

- Appraisal theories of emotion

- Psychopathy – the cognitive, neural and genetic roots

- Anxiety from a perspective of basic cognitive function (associative learning and attention)

- Childhood obesity – psychological perspectives

- Seedling Lab

- Ageing and dementia

- Depression

- Parkinson’s Disease

- Early intervention

- Cognitive training

- Sleep and circadian rhythms

- Neuroimaging

- E-health

- Healthy Brain Ageing Clinic

- The nature and treatment of anxiety disorders, mood disorders, and complex trauma

- Cognitive and emotional processes underpinning complex mental health disorders, and enhancing cognitive therapy interventions for these populations

- Imagery in the maintenance of mental health disorders, and imagery-based interventions such as imagery rescripting

- The role of attachment in the aetiology of psychopathology

- Understanding the unique challenges of youth mental health and effectively tailoring treatment for these populations

- Schema therapy models and application to a range of complex mental health disorders

- The effects of stress/trauma on memory

- The impact of post-incident debriefing on psychological wellbeing

- iWitnessed

- Forensic Psychology Lab

- Not Guilty: Sydney Exoneration Project

- Frontotemporal dementia

- Dementia: Early diagnosis and prognosis

- Social cognition

- Neuroimaging

- FRONTIER Lab

- Romantic relationships and wellbeing

- Social comparison

- Weight stigma

- Social control of health behaviours

- Embodiment, feminism and eating disorders

- Decolonisation of clinical psychology

- Community-based approaches to mental health

- Art-based approaches to mental health education and activism, including poetic inquiry, creative writing and fine arts

- Sociopolitical approaches to mental health

- The efficacy of cognitive and/or behavioural treatments in the management of chronic pain and rheumatoid arthritis

- Understanding the process of adjustment in patients with rheumatoid arthritis and other illnesses

- The role of hypervigilance in the development, maintenance, prevention and treatment of chronic pain

- Schizotypal personality disorder

- Psychopathy and the Dark Triad of personality

- Psychology & psychopathology of religion and spirituality

- Pain and co-occurring physical and mental health difficulties

- Cognitive and emotional processes underpinning disability associated with pain, anxiety, trauma, sleep difficulties, diabetes, and cancer

- Cognitive processing biases including attention, interpretation, and expectancy biases

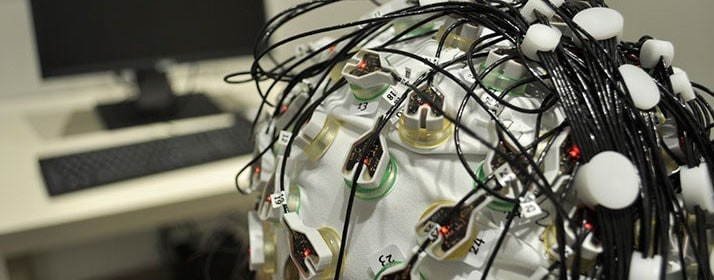

- Cognitive bias measurement techniques, including reaction time and accuracy-based computer tasks, eye-tracking, and neuropsychological assessment through EEG, SSVEP

- Developing new techniques to modify cognitive processing biases, to augment clinical interventions

Eating disorders from the perspective of:

- clinical psychology

- psychiatry

- neuropsychology

- behavioural medicine

- Child Behaviour Research Clinic

- Clinical Psychology Unit

- Forensic Psychology Lab

- Gambling Treatment and Research Clinic

- iWitnessed

- Not Guilty: Sydney Exoneration Project

- Psychopharmacology Lab

- SEEDLing Lab

- Social cognition and individual differences lab

- Sydney Placebo Lab

- The Lambert Initiative for Cannabinoid Therapeutics

- Visual Cognition Lab

Cognition

Cognitive psychology explores the internal brain processes that people use to store, process, retrieve, transform and use information to interpret objects and events in the world and to solve problems, make decisions, speak and act.

- Cognitive training and self-regulatory mediators

- Working memory

- Fluid intelligence and reasoning

- Cognitive Individual Differences and Training Lab

- How streaks of events affect decision making

- Biases in the interpretation of financial data

- Hormonal influences on risky choices

- Cognitive illusions in reasoning

- Complex problem solving

- Neural Coding

- Functional Magnetic Resonance Imaging (fMRI)

- Magnetoencephalography (MEG)

- Multivariate Pattern Analysis (brain decoding)

- Object and face recognition

- Body Perception

- Social Perception

- Visual Attention

- Monitoring and control of thoughts and emotions (metacognition)

- Cognitive, educational, and affective psychology

- Advanced statistical modelling including multi-level modelling, growth curve modelling, and meta-analytic methods

- The nature and acquisition of knowledge in children and adults

- Concept & language learning

- Science and math education

- Seedling Lab

- Object recognition and interpreting object orientation

- Visual attention and selection

- Capacity limits in encoding visual information, repetition blindness, attentional blink

- Visual Cognition Lab

- Memory processes in dementia

- Prospection in dementia

- Anhedonia and apathy in dementia

- Social communication in dementia

- Social cognition

- Face processing

- Emotion perception

- Ageing and dementia

- Neuroimaging

- Memory: emotion memory, episodic memory, semantic memory

- Discrimination learning and stimulus generalisation

- Inductive reasoning

- Individual differences in rule learning

- Causal learning and causal illusions

- Fear conditioning and fear generalisation

- Implicit learning and automaticity

- Associations and reasoning in causal learning

- Action preparation and cognitive control

- Transcranial Magnetic Stimulation Lab

- Ageing and dementia

- Depression

- Parkinson’s Disease

- Early intervention

- Cognitive training

- Sleep and circadian rhythms

- Neuroimaging

- E-health

- Healthy Brain Ageing Clinic

- The involuntary capture of visual and auditory attention

- Top-down modulation of attentional capture

- Inattentional blindness

- Locus of selection in visual attention

- False memory and eye-witness testimony

- Gullibility, reasoning, and problem solving

- Eyewitness memory

- Interviewing strategies

- iWitnessed

- Forensic Psychology Lab

- Not Guilty: Sydney Exoneration Project

- Visual attention and selection

- The influence of learned relationships on attentional capture and control

- The interaction between attention and decision-making

- The role of attentional biases in psychopathology

- Cognitive models of mental illness

- Frontotemporal dementia

- Dementia: Early diagnosis and prognosis

- Social cognition

- Neuroimaging

- FRONTIER Lab

- Machine learning and neural networks

- Neural coding and decoding

- Magnetic resonance spectroscopy (MRS)

- Functional magnetic resonance imaging (fMRI)

- Electroencephalography (EEG)

- Predictive coding

- Efficient encoding

- Perception (vision, audition, etc.)

- Attention

- Adaptation

- Working memory

- Reliability of children as eyewitnesses

- Eyewitness memory in children and adults

- False memories in forensic context

- iWitnessed

- Forensic Psychology Lab

- Not Guilty: Sydney Exoneration Project

Developmental

Developmental psychology is concerned with describing and explaining psychological changes that occur as individuals progress from conception to death. Such changes have many sources, including physical maturation, learning, social interaction and other experiences. Developmental psychology is thus best described as an approach to psychological investigation that can concern itself with typical and atypical development in all domains of psychology, from language and cognition to emotion and social behaviour.

- Developmental psychopathology

- Parenting and family processes

- Family therapy

- Childhood emotional and behavioural problems

- Learning theory

- Psychological genetics and epigenetics

- Child Behaviour Research Clinic

- The nature and acquisition of knowledge in children and adults

- Concept & language learning

- Science and math education

- Seedling Lab

- Early childhood development and psychopathology

- Antisocial behaviour and conduct problems across the lifespan

- Parenting processes & the parent-child relationship (including attachment)

- Family-based interventions (including parent training)

- Emotional and moral development, empathy, callous-unemotional traits

- Understanding factors involved in school-based bullying

- Impact of epilepsy, epilepsy surgery and head injury on memory and learning ability in children

- Cognitive fatigue, executive functions and social/moral reasoning in prematurely born children or children who have sustained a head injury

- The development of psychopathy, behavioural genetics and epigenetic processes

- The developmental mechanisms of disorders, childhood obesity

- Seedling Lab

- Developmental aspects of prejudice and discrimination

- The SUPIR Lab

- Reliability of children as eyewitnesses

- Eyewitness memory in children and adults

- False memories in forensic context

- iWitnessed

- Not Guilty: Sydney Exoneration Project

Forensic

Forensic psychology is the application of psychological knowledge and theories to all aspects of the justice system, including the processes and the people.

- Eyewitness memory

- Lie detection

- iWitnessed

- Not Guilty: Sydney Exoneration Project

- Forensic Psychology Lab

- Reliability of children as eyewitnesses

- Eyewitness memory in children and adults

- False memories in forensic context

- iWitnessed

- Not Guilty: Sydney Exoneration Project

- Forensic Psychology Lab

Health

Health psychology relates broadly to questions about how people stay physically well, and how to optimise their experience and that of their families when they become ill. Overall, health psychologists study the factors that promote and maintain good health and prevent illness, lead people to take up optimal screening to detect illness at an early stage (such as mammograms for the detection of breast cancer), and ensure early and accurate diagnosis, effective treatment, good psychological adjustment to acute and chronic illness, optimal quality of life and optimal end-of-life care.

Health behaviours are key to good health, and are amenable to psychological interventions, so these are a key interest for health psychologists. Health psychologists are also interested in the analysis and improvement of the health care system and health policy formation.

- Risk perception

- Psycho-oncology

- Medical decision-making

- Stress and burnout in cancer health professionals

- Medical and health communication

- Decision aids to assist decision making for cancer patients

- Centre for Medical Psychology & Evidence-based Decision-making

- Psycho-oncology Cooperative Research Group

- How are placebo and nocebo effects formed?

- How long do placebo and nocebo effects last?

- How do cues influence reward-seeking behaviour?

- Do we need to be aware for learning to occur?

- How does variability affect our learning?

- Health-related quality of life

- Measurement issues in health-related quality of life

- Caregiver involvement in oncology

- Genes by environment interactions and health

- Cognitive decline and Alzheimer's disease

- Health and risk communication and decision making

- Social cognition and individual differences lab

- Psycho-oncology

- Cancer-related cognitive impairment

- Quality of life outcomes of cancer patients

- Medical and health communication

- Health literacy

- Medical decision-making

- Doctor-patient communication

- Quality of life outcomes of cancer patients

- HPV vaccination: psychological impact

- Quality of life outcomes of cancer patients

- Measurement issues in health-related quality of life (HRQOL) and other self-reported health outcomes (PROs)

- Optimising study design and data quality of HRQOL/PROs endpoints in clinical studies, including randomised trials and longitudinal studies

- Patient preferences and utility estimation in health context, particularly cancer

- Sydney Quality of Life Office

- Psycho-oncology

- Medical decision-making

- Health professional-patient communication

- Family caregivers

- Qualitative research

- Social cognition

- Social comparison

- Close relationships

- Social control of health behaviours

- Psychological impact of disease

- Development of interventions to facilitate adjustment to illness

- Evaluation of interventions for preventing physical and psychological morbidity in patients with ill health

- Psycho-oncology

- Medical and health communication

- Psychophysiology

- Communicating bad news

- Medical decision-making

- Psychological intervention development and evaluation

- Clinical trial consent

- Pain and co-occurring physical and mental health difficulties

- Cognitive and emotional processes underpinning disability associated with pain, anxiety, trauma, sleep difficulties, diabetes, and cancer

- Cognitive processing biases including attention, interpretation, and expectancy biases

- Cognitive bias measurement techniques, including reaction time and accuracy-based computer tasks, eye-tracking, and neuropsychological assessment through EEG, SSVEP

- Developing new techniques to modify cognitive processing biases, to augment clinical interventions

Learning

The psychology of learning is concerned with understanding how experience shapes behaviour. Learning research with humans and other animals examines the effect of external stimuli and events, internal physical states, motivation, attention and higher order cognition on the performance of a wide range of simple and complex behaviours, from reflexive biological responses to reasoned decision making.

The study of learning seeks to reveal the theoretical, functional and neurophysiological underpinnings of these behavioural changes.

- Activity and body weight in rats or humans

- Flavour/odour preference/aversion learning in rats or humans

- Placebo effects

- Conditioning in rats or humans

- Australian Learning Group

- How are placebo and nocebo effects formed?

- How long do placebo and nocebo effects last?

- How do cues influence reward-seeking behaviour?

- Do we need to be aware for learning to occur?

- How does variability affect our learning?

- Simple associative learning

- Vision and touch perception

- Transcranial Magnetic Stimulation Lab

- Goal directed behaviour

- Habit formation

- Executive processes

- Discrimination learning and stimulus generalisation

- Inductive reasoning

- Individual differences in rule learning

- Causal learning and causal illusions

- Fear conditioning and fear generalisation

- The relationship between learning and attention

- Implicit learning and automaticity

- Discrimination learning and stimulus generalization

- Associations and reasoning in causal learning

- Australian Learning Group

- Visual attention and selection

- The influence of learned relationships on attentional capture and control

- The interaction between attention and decision-making

- The role of attentional biases in psychopathology

- Cognitive models of mental illness

- Dopamine

- Lateral hypothalamus

- Neural circuits of appetitive and aversive learning

- Rodent models of addiction and schizophrenia

Method and theory

This group is concerned with the philosophical, theoretical and methodological aspects of research in psychology. These include: the analysis of philosophical and theoretical assumptions that underpin psycho-social research; theory construction; the concept of measurement; evaluating research designs, research types, and the use of descriptive and inferential statistics.

- Measurement of Cognitive Abilities

- Rasch Measurement

- Multi-level Models

- Cognitive Individual Differences and Training Lab

- Measurement issues in health-related quality of life

- Measurement issues in psycho-oncology

- Social cognition and disagreement

- Autism

- Neurocognitive bases of discrimination

- Intergroup processes

- Decision making

- Neuroimaging (fMRI, EEG, fNIRS)

- Philosophy of mind and theory-building

- Qualitative approaches to measuring health-related quality of life

- Qualitative research in psycho-oncology

- Monitoring and control of thoughts and emotions (metacognition)

- Cognitive, educational, and affective psychology

- Advanced statistical modelling including multi-level modelling, growth curve modelling, and meta-analytic methods

- Reproducibility in science

- Open access publishing

- Using multivariate techniques for individual differences research

- CODES Lab

- Qualitative Research Methods

- Narrative Inquiry

- Critical and Post-Structural Psychology

- Participatory Action Research

- Philosophy and Clinical Psychology

- CODES Lab (Cognitive and Decision Sciences Research Lab)

- Cognitive Individual Differences and Training Lab

Neuroscience

Neuroscience is the study of the biological basis of all aspects of psychology, and is both a basic science and a clinical process to understand and treat psychological and psychiatric disorders. The scope of neuroscience is extensive and neuroscientists employ a wide range of techniques: studying the physiology of neural tissue, using animal models of behaviour to investigate the molecular biology and neurochemistry of fundamental psychological processes, and applying neuroimaging techniques to associate brain activity with human perception, action, attention, memory, language, emotion and mood.

- Activity and body weight in rats or humans

- Novel pharmacotherapies for psychiatric and neurological disorders

- Treatments targeting the brain oxytocin system

- Treatments targeting extrasynaptic GABA-A receptors

- Substance-use disorders and social disorders (e.g. autism spectrum disorder)

- Novel neural systems involved in neurological and mental health disorders

- Cannabinoid therapeutics

- Cellular and animal models

- The Lambert Initiative for Cannabinoid Therapeutics

- Neural Coding

- Functional Magnetic Resonance Imaging (fMRI)

- Magnetoencephalography (MEG)

- Multivariate Pattern Analysis (brain decoding)

- Object and face recognition

- Body Perception

- Social Perception

- Visual Attention

- The anatomy and physiology of the vestibular system

- Vestibular loss and compensation

- Long-term potentiation (LTP)

- The role of the hippocampus in spatial memory

- The role of the hippocampus in spatial learning

- Social cognition and disagreement

- Autism

- Neurocognitive bases of discrimination

- Intergroup processes

- Decision making

- Neuroimaging (fMRI, EEG, fNIRS)

- Philosophy of mind and theory-building

- Neural processes (using transcranial magnetic stimulation - TMS) underlying:

- object recognition

- Visual attention and selection

- Capacity limits in encoding visual information, repetition blindness, attentional blink

- Visual Cognition Lab

- Neural coding in tactile and visual systems

- Transcranial Magnetic Stimulation Lab

- Memory processes in dementia

- Prospection in dementia

- Anhedonia and apathy in dementia

- Social communication in dementia

- Psycho-neuroimmunology

- Neurochemistry of complex cognition

- Social cognition

- Face processing

- Emotion perception

- Ageing and dementia

- Neuroimaging

- Memory: emotion memory, episodic memory, semantic memory

- Neuropsychological rehabilitation

- Impact of neurological disorders and/or brain injury on psychological functioning

- Drug discovery

- Modulation of social behaviour by oxytocin and related systems

- Therapies for treatment of addiction

- Analytical chemistry

- Novel synthetic recreational drugs

- Pheromones and olfactory learning

- The Lambert Initiative for Cannabinoid Therapeutics

- Psychopharmacology Lab

- Ageing and dementia

- Depression

- Parkinson’s Disease

- Early intervention

- Cognitive training

- Sleep and circadian rhythms

- Neuroimaging

- E-health

- Healthy Brain Ageing Clinic

- Frontotemporal dementia

- Dementia: Early diagnosis and prognosis

- Social cognition

- Neuroimaging

- FRONTIER Lab

- Machine learning and neural networks

- Neural coding and decoding

- Magnetic resonance spectroscopy (MRS)

- Functional magnetic resonance imaging (fMRI)

- Electroencephalography (EEG)

- Predictive coding

- Efficient encoding

- Perception (vision, audition, etc.)

- Attention

- Adaptation

- Working memory

- Dopamine

- Lateral hypothalamus

- Neural circuits of appetitive and aversive learning

- Rodent models of addiction and schizophrenia

- Functional neuroimaging

- Adaptation and aftereffects

- Motion perception

- Search and eye-movements

- Optic flow, heading and moving observers

- Time perception

- Visual delusion

- Attention

- Virtual Reality

- History of cognitive neuroscience

Organisational

Organisational psychology focuses on the application of the research, theory and practice of psychology to the enhancement of life experience, work performance and development of organisations and groups. Coaching psychology encompasses executive coaching, workplace coaching, leadership development and personal coaching at both group and individual levels.

In coaching, the key theoretical frameworks include solution-focused, cognitive-behavioural and psychodynamic theory, complexity/systems theory and adult developmental theory.

- Coaching: workplace, life and health

- Meta-cognition and attention in self-regulation and emotional regulation

- Client-coach relationships

- Positive psychology, wellbeing and goal attainment

- Leadership and adult development

- Mindfulness

- Group functioning and team development

- The Coaching Psychology Unit

- Coaching: workplace and leadership

- Positive Organisational Change

- Positive psychology, wellbeing and goal attainment

- Leadership and Network Cognitions

- Social Network Analysis and Systems

- Technology and self-development

- The Coaching Psychology Unit

Perception

Perception is the process by which signals from the sensory periphery (receptors in the eyes, ears, skin, etc.) are interpreted and organised to produce a meaningful experience of the external world. By representing the objects and attributes of our surrounding environment, perception allows us to interact with our world.

We run an informal discussion group and journal club where we discuss a broad spectrum of topics in perception.

- Visual perception of motion & orientation

- Binocular rivalry

- Auditory movement

- Auditory localization during head movements

- Interactions between visual and auditory movement

- Audio-visual attention

- Models of cross-modal integration

- Perceptual organization

- Computation of three-dimensional shape of surfaces

- Surface reflectance

- Material properties of surfaces

- Neural Coding

- Functional Magnetic Resonance Imaging (fMRI)

- Magnetoencephalography (MEG)

- Multivariate Pattern Analysis (brain decoding)

- Object and face recognition

- Body Perception

- Social Perception

- Visual Attention

- The anatomy and physiology of the vestibular system

- Vestibular loss and compensation

- Vestibulo-ocular reflex

- Eye movements, especially ocular torsion

- Linear acceleration and angular acceleration stimulation

- Vestibular Research Laboratory

- Processing letters and words

- Binding perceptual features

- Temporal aspects of perception and visual cognition

- Capacity limits on perceptual processing

- Machine learning and neural networks

- Neural coding and decoding

- Magnetic resonance spectroscopy (MRS)

- Functional magnetic resonance imaging (fMRI)

- Electroencephalography (EEG)

- Predictive coding

- Efficient encoding

- Perception (vision, audition, etc.)

- Attention

- Adaptation

- Working memory

- Vision science and perception: motion perception, adaptation, attention, binocular vision, illusions, neuroimaging, artificial intelligence

- Perceptual aspects of Advertising & Marketing Communication

Personality and intelligence

Our research focus is on the understanding of (a) trait theories of intelligence (including traditional notions of intelligence and emotional intelligence), metacognition and personality; (b) the core individual characteristics (cognitive/metacognitive abilities, normal and abnormal personality, mental and, religion and spirituality, and decision-making paradigms) that influence/predict different life outcomes; and (c) the ways these individual differences serve as the bases of much of contemporary psychological assessment in educational, clinical, cross-cultural, forensic, and organizational settings.

- Individual differences in Fluid Cognitive Functions

- Cognitive training and self-regulatory mediators

- Working memory

- Fluid intelligence and reasoning

- Psychometric Assessment

- Cognitive Individual Differences and Training Lab

- Monitoring and control of thoughts and emotions (metacognition)

- Cognitive, educational, and affective psychology

- Advanced statistical modelling including multi-level modelling, growth curve modelling, and meta-analytic methods

- Meta-cognition

- Decision-making

- Cognitive styles/thinking dispositions and their role in cognition

- Cognitive response-selection strategies and their role in academic achievements

- CODES Lab

- Emotional intelligence

- Coping with stress

- Methodological issues in personality assessment

- Response distortion in personality assessment

- Non-cognitive predictors of academic achievement

- Personality - the traits approach

- Adult attachment styles

- Psychology & psychopathology of religion & spirituality

- Cross-cultural psychological elements of faith

- CODES Lab (Cognitive and Decision Sciences Research Lab)

- Cognitive Individual Differences and Training Lab

Social

What makes social psychology social is that it focuses on how people are affected by other people. In particular, social psychology is the scientific investigation of attitudes, feelings and behaviour, and the interactions between these components. A fundamental goal of social psychology is to understand the factors that shape people's interpersonal relationships and their experiences in the social world.

- Social cognition

- Person perception

- Genes by environment interactions

- Existential psychology

- Judgment and decision making

- Gender

- Stereotypes and prejudice

- Social cognition and individual differences lab

- Social cognition and disagreement

- Autism

- Neurocognitive bases of discrimination

- Intergroup processes

- Decision making

- Neuroimaging (fMRI, EEG, fNIRS)

- Philosophy of mind and theory-building

- Memory conformity

- The effects of group discussion on memory

- iWitnessed

- Not Guilty: Sydney Exoneration Project

- Forensic Psychology Lab

- Social cognition

- Social comparison

- Close relationships

- Social control of health behaviours

- Reliability of children as eyewitnesses

- Eyewitness memory in children and adults

- False memories in forensic context

- iWitnessed

- Not Guilty: Sydney Exoneration Project

- Forensic Psychology Lab

- Forms of prejudice/discrimination

- The reduction of intergroup bias/prejudice/discrimination

- Improving the measurement of intergroup bias/prejudice/discrimination

- The SUPIR Lab

- iWitnessed

- Forensic Psychology Lab

- Not Guilty: Sydney Exoneration Project

- Social cognition and individual differences lab

- The SUPIR Lab (Sydney University Psychology of Intergroup Relations Lab)